In 2019, Microsoft CEO Satya Nadella told the Mobile World Congress that “every company is now a software company.” Nadella believes a company’s performance in a connected, digital world depends on its software setup. Software, infrastructure and other digital tools govern website performance, order responses, inventory management and much more.

The problem for small and medium-sized businesses (SMBs) is knowing where to start. With so many options available, choosing the right software, hardware, cloud storage services and other platforms can be challenging. However, teaming up with an information technology (IT) partner can help SMBs implement and maintain the right tech solutions to fuel growth and success.

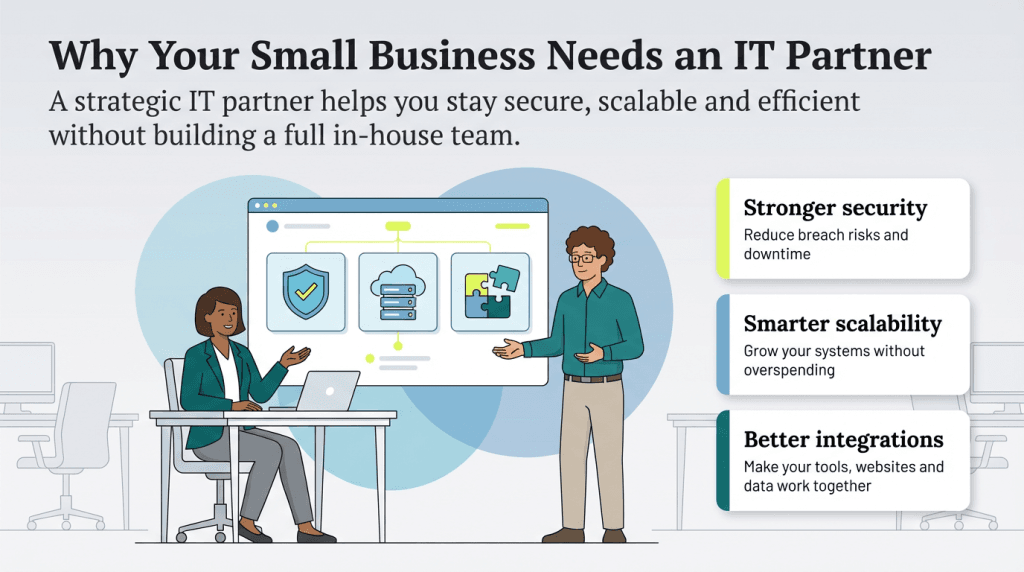

Reasons your business needs an IT partner

Collaborating with a strategic partner that specializes in IT infrastructure and processes can help SMBs save time and money.

“IT partners come in many forms but ultimately serve the same goal — assisting your business in selecting, acquiring, deploying and managing your technology platforms and services,” explained Brad Kaminski, channel director at Synology’s United States headquarters. “Depending on how much in-house IT staff exists, the role of partners can span from just sourcing and acquisition to serving as a system admin for your employees and departments.”

Consider the following reasons SMBs should align themselves with a resourceful IT partner.

An IT partner can help you develop an IT strategy.

An IT expert can help a business map out a technology growth path that aligns with its size, scale and functionality. They can also introduce you to tech solutions you may not be aware of, even if you already have an internal IT staff.

Kaminski emphasized that a dedicated IT partner brings crucial industry knowledge about the best products to execute your IT strategy — and cautioned that doing the tech yourself is much too risky when the fate of your business is at stake. “Choosing products that are robust and up to date with support and development is critical for proper IT planning,” Kaminski pointed out. “Without an IT staff, taking this on solo often results in cobbled-together consumer solutions that lack functionality, scalability and security.”

A smart IT partner will help you develop an IT strategy that supports your business goals and aligns with your IT and corporate budgets.

An IT partner can boost network security.

Protecting your business’s sensitive information is critical for client trust and continued operations, and preparation is the only way to avert or minimize security disasters. Your dedicated IT partner can help you prepare by devising a plan to protect your network and outlining how to keep it updated with the latest security measures.

Your IT partner can:

- Assess your business’s vulnerabilities.

- Safeguard against the unique risks you face.

- Prepare you for a worst-case scenario.

- Help you avoid disruptions, downtime and other issues.

- Outline a disaster preparedness plan.

“For cybersecurity, an IT partner can be a managed detection and response (MDR) partner or a managed security service provider (MSSP),” explained Lisa Campbell, vice president of SMB at CrowdStrike. “In both instances, these partners act as an extension of your security and IT teams.” Both MDRs and MSSPs serve crucial roles:

- MDRs: MDRs focus on proactive, hands-on threat hunting and immediate remediation.

- MSSPs: MSSPs are broader and focus more on monitoring, compliance and basic incident alerts.

If you don’t protect your business from data breaches, it’ll cost you dearly later. According to Verizon’s 2024 Data Breach Investigations Report, small businesses in the U.S. experienced 617 breaches and 919 security incidents between Nov. 1, 2022, and Oct. 31, 2023. Notably, ransomware attacks accounted for 11 percent of all incidents and were considered top threats for 92 percent of industries.

Thanks to your IT partner’s involvement, you’ll be secure in the knowledge that your systems will be available and reliable, no matter what disasters you encounter.

An IT partner can help you scale affordably.

Scalability is crucial to achieving profitable growth, but adding staff members and expanding your IT infrastructure, such as servers and software seats, can be challenging.

Fortunately, an experienced IT partner can help you scale your infrastructure up or down as needed, faster and more efficiently than handling it yourself. Additionally, due to their experience and connections, they can often access hardware and software at better prices than individual businesses approaching vendors directly. Your IT partner can also ensure the smooth installation of all new hardware and software.

An IT partner can guide you toward the best cloud solution.

The cloud can help you grow your business by providing ample storage and state-of-the-art computing power. However, different types of cloud services exist and choosing the right platform and vendor can be overwhelming.

A knowledgeable IT partner will help you select the vendors whose services offer you the storage and functionality you need at a competitive price. They’ll also handle implementation, which can minimize or eliminate potential disruptions to critical business functions.

Top cloud services for businesses include Amazon Web Services, Box, Carbonite, Dropbox for Business and Google Workspace.

An IT partner can ensure your tech works together.

Your IT partner can ensure your business software and platforms integrate and share data, whether through plug-ins, custom coding or by selecting compatible solutions. For example, the best point-of-sale systems can often work with hundreds of compatible third-party apps that can streamline your operations, as well as credit card processors, inventory programs and accounting software. Similarly, the best customer relationship management software solutions often integrate with AI tools, email marketing software, enterprise resource planning software and much more.

When your IT partner facilitates seamless integrations between your tech solutions, you can enhance productivity and simplify processes across your business.

An IT partner can improve website functionality and the user experience.

An expert IT partner can integrate your business software with your website infrastructure to fine-tune your website design, creating a great customer experience while ensuring smooth workflows and data sharing for your business.

For example, they can connect your website to your accounting software so each sale is automatically recorded and an invoice is sent to the warehouse. This integration triggers an alert for the warehouse team, notifying them of a new order with an accompanying invoice ready to include with the shipment. Additionally, your IT partner can integrate your website with inventory management software, ensuring that stock levels are updated in real time so customers know exactly what’s available. This creates a hassle-free experience for customers and efficient order processing for your team.
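The workflow above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Store` class, its field names and the sample order are hypothetical stand-ins for real accounting, warehouse and inventory systems, which an IT partner would connect through each vendor's own integration tools.

```python
from dataclasses import dataclass, field


@dataclass
class Store:
    """Hypothetical in-memory stand-in for accounting, warehouse and inventory systems."""
    stock: dict[str, int]                                       # SKU -> units available
    ledger: list[dict] = field(default_factory=list)            # accounting records
    warehouse_alerts: list[str] = field(default_factory=list)   # new-order notifications

    def process_sale(self, order_id: str, sku: str, quantity: int, unit_price: float) -> dict:
        # Refuse the sale if the website would be overselling.
        available = self.stock.get(sku, 0)
        if quantity <= 0 or quantity > available:
            raise ValueError(f"cannot sell {quantity} of {sku}; {available} in stock")

        # 1. Record the sale in accounting and build the invoice.
        invoice = {
            "order_id": order_id,
            "sku": sku,
            "quantity": quantity,
            "total": round(quantity * unit_price, 2),
        }
        self.ledger.append(invoice)

        # 2. Alert the warehouse that a new order and its invoice are ready.
        self.warehouse_alerts.append(f"New order {order_id}: invoice ready for shipment")

        # 3. Update stock so the website can show current availability.
        self.stock[sku] = available - quantity
        return invoice


# Made-up sample data for illustration only.
store = Store(stock={"MUG-01": 10})
invoice = store.process_sale("A1001", "MUG-01", 3, 12.50)
print(invoice["total"], store.stock["MUG-01"])
```

Handling all three steps in one place means a sale cannot be recorded without the warehouse alert and the stock update also happening, which is the kind of consistency a real integration aims for.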

Your IT partner can also fine-tune your website’s back end to ensure optimal page load speeds and enhanced performance for a smooth user experience.

An IT partner can reduce operational downtime.

Unfortunately, tech issues — including software crashes, device misconfigurations, cyberattacks and more — aren’t uncommon. However, trying to resolve them on your own can lead to costly downtime as you work through solutions.

Operational downtime can be devastating. According to security company Pingdom, the average cost of downtime for a small business is $427 per minute. Using the standard downtime cost formula (minutes of downtime × cost per minute), one hour of downtime can cost a small business $25,620. For a business operating a standard eight-hour day, a single day of downtime can cost nearly $205,000.
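To run the same calculation with your own numbers, the formula translates into a short Python function. This is a minimal sketch: the function name `downtime_cost` is an illustrative choice, and the $427 figure is the average cited above, so substitute your own per-minute estimate if you have one.

```python
def downtime_cost(minutes_of_downtime: float, cost_per_minute: float) -> float:
    """Estimate outage cost as minutes of downtime x cost per minute."""
    if minutes_of_downtime < 0 or cost_per_minute < 0:
        raise ValueError("inputs must be non-negative")
    return minutes_of_downtime * cost_per_minute


COST_PER_MINUTE = 427  # average small-business figure cited in the article

one_hour = downtime_cost(60, COST_PER_MINUTE)
one_day = downtime_cost(8 * 60, COST_PER_MINUTE)  # standard eight-hour day

print(f"One hour: ${one_hour:,.0f}")        # One hour: $25,620
print(f"Eight-hour day: ${one_day:,.0f}")   # Eight-hour day: $204,960
```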

A trusted IT partner has likely dealt with any issue you experience and knows how to address it quickly, saving your business very real time and money.

“Saving money goes way beyond the purchase price of equipment,” Kaminski noted. “Productivity and efficiency skyrocket when the right tools are in place. A dedicated IT partner will help equip your team for success and will also build a plan for unexpected obstacles.”

Kaminski also explained that an IT partner can ensure backups are in place in case of a disaster or incident, helping return operations to normal quickly. “A proper backup strategy will make sure your business can recover from disaster both natural and human made,” Kaminski added.

An IT partner provides opportunity cost advantages.

Building and maintaining a business IT infrastructure is complex and keeping it secure requires detailed knowledge and oversight. Not all business owners or team members have the time or desire to acquire these skills.

Opportunity cost is the cost of choosing one action over another. While you’ll pay your IT partner for their services, this expense may be far less than the time and cost required to learn everything from scratch and handle IT yourself. Kaminski pointed out that a skilled IT partner saves you the time and expense of learning IT fundamentals from the ground up.

“As a business grows, it faces many challenges,” Kaminski explained. “Having a dedicated IT partner who has familiarity and experience in customizing technology solutions for your particular needs will save you time, money and avoid potential disasters surrounding data security.”

An IT partner is often more affordable than an in-house IT team.

An IT partner provides 24/7 expertise and can proactively manage software upgrades, patches, hardware improvements, workflow automations, cybersecurity vulnerabilities, antivirus and security services and more. They are available during emergencies both within and outside of standard business hours. When you factor in the costs saved by avoiding downtime and lost productivity, an IT partner is often much more affordable than hiring a full-time IT employee.

“By working with a managed security partner, SMBs can gain access to enterprise-grade protection and threat intelligence, without the overhead of building an in-house team,” Campbell explained. “These partnerships can ensure proactive threat monitoring, fast incident response and the implementation of best-in-class cybersecurity technologies.”

Many businesses with an IT staff supplement it with an IT partner, benefiting from the partner’s additional expertise.

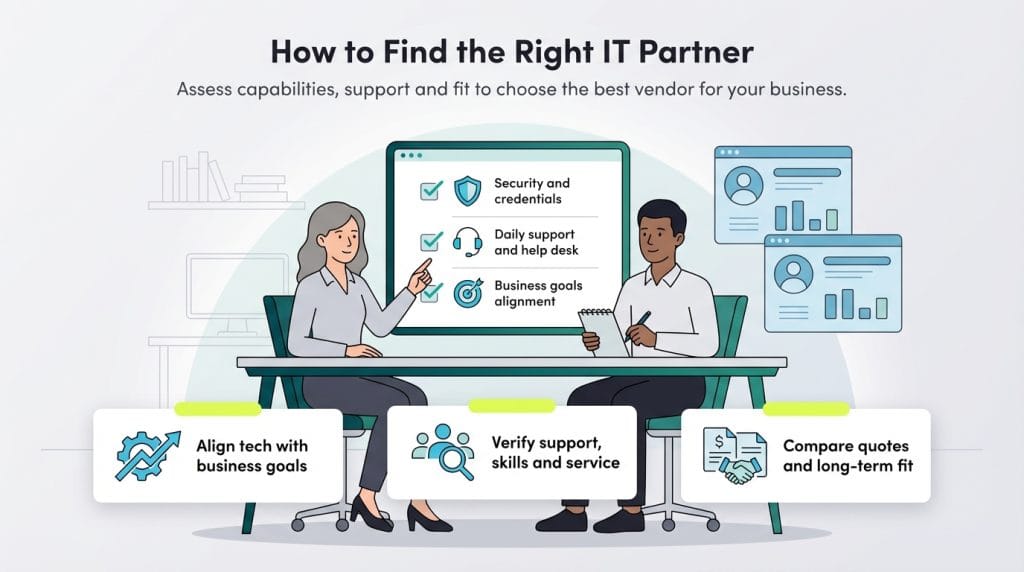

How to find the right IT partner for your business

Choosing the right outsourcing partner for your IT needs is critical. Your IT partner must be able to align your technology with your business goals to create value and ensure the smartest, most efficient use of your technology assets. Consider the following steps to ensure you partner with the right vendor.

Gather a list of potential IT partners.

When seeking an IT partner, Kaminski suggests utilizing a list of potential vendors from a trusted source. “Synology has a list of our trusted IT partners that we have already vetted and collaborated with to ensure they meet our standards,” Kaminski shared. “Using pre-qualified vendors from a company you already trust can help narrow your search and find your IT partner faster.”

Here are some other ways to build your shortlist of potential IT partners:

- Seek recommendations from industry peers: Connect with business owners in your industry or network to learn which IT partners they work with and trust.

- Look for online reviews: Research potential partners on sites like G2, Trustpilot or Google Reviews to see feedback from current and past clients.

- Attend industry events or trade shows: Conferences and trade shows are excellent places to meet IT providers, ask questions and gather information in person.

Decide whether or not you want a local partner.

After gathering your shortlist, decide how important your partner’s physical location is to your business. A local IT partner can be beneficial for handling equipment installation and addressing hardware, software or connectivity issues on-site. A nearby partner may also allow quicker emergency response times and face-to-face meetings to discuss your tech needs.

However, if your IT needs are primarily digital or cloud-based, you may be fine with a remote partner. Many remote IT partners provide 24/7 support, remote monitoring and troubleshooting for software and network issues via secure remote access. They can also often handle software updates, data backups and cybersecurity remotely, making a physical presence less critical.

Consider your business’s specific requirements to decide whether a local or remote partner is the best fit.

Ensure your potential IT partner can handle your goals.

Meet with promising IT partners to ensure they can handle the upgrades you envision and advise you on the best way to meet your business goals. Ask about the hardware and software you’ll need to achieve the desired outcome and see if they can provide cost estimates and implementation timelines.

Before presenting your IT goals to potential partners, ask your team about their current frustrations and what technology could make their lives easier. For example, if your customer service team struggles with a slow ticketing system, an IT partner could recommend a more efficient CRM solution to speed up response times. Similarly, if remote employees face connectivity issues, your IT partner might suggest cloud-based tools for smoother collaboration.

You should also consider frequent customer complaints, such as slow page load times or website checkout issues, and present these to promising IT partners to see what solutions they propose.

Ensure your potential IT partner can handle your daily needs.

List the services you’d need your IT partner to provide on a daily or ongoing basis, such as remote monitoring, regular software updates, data backups and cybersecurity support. Do you want a 24-hour help desk? If so, ensure they can offer round-the-clock assistance to address issues as they arise.

Present your daily and ongoing needs to your potential vendor and ask how they plan to meet them. Be sure they have the resources and expertise to keep your systems running smoothly on a day-to-day basis.

Check your potential IT partner’s credentials.

By now, you likely have one or two promising candidates and it’s time to dig a little deeper to ensure they have the depth of technical knowledge and credentials necessary to do the job. Ask about IT certifications, such as Microsoft Certified, Cisco Certified or CompTIA, which demonstrate expertise in key areas. Additionally, inquire about their experience with your industry and specific systems or software you use.

Check your potential IT partner’s references.

To verify their track record, request references and case studies to see how a potential IT partner has handled projects you’re considering. Speak with their other clients to ask about the IT partner’s communication skills, responsiveness and problem-solving abilities. This feedback will give you insight into what it’s like to work with them on a daily basis and whether they’re a good fit for your business needs.

Ask about your potential IT partner’s service-level guarantees.

Ask your potential IT partner about specific service-level guarantees, such as response times for support queries, on-site visits and issue resolution. Also, inquire about hardware and software warranties. Clear service-level agreements ensure your IT partner is accountable and help minimize costly downtime for your business.

Get quotes and make your final decision.

At this point, a clear winner is likely emerging, but their quote must still align with your budget and needs. Although price is important, prioritize the provider who best understands your needs and has the availability and responsiveness you require.

Jeremy Bender contributed to this article.